It’s possible that you'll see an error message while trying this expression. The created visualization can be placed in a nice dashboard where other visualizations of logs are shown. Remember that we created an index per day? By using * we make sure that we can see all logs for all days together.Īfter creating this index pattern, we are already able to see and search logs for this particular application and appender. In our case the index pattern will be prod_logs_awemsome-application_requests_*. This can be done by following this tutorial. Using the dataįirst we have to create our index pattern. In the next chapter we'll show how this data can be used to create visualizations and dashboards. Example: prod_logs_awemsome-application_requests_2019.06.18. We create an index per environment, application, appender and date. The output part decides where our Elasticsearch is hosted and defines the index. The filter part contains a Grok filter to format the output. In the input part we'll add extra fields to add information about our environment. To keep a better overview, we will keep the examples to requestlogs.įirst thing we wanted to share in our library were the Logback appenders: elk-appenders.xml In our applications we send different kind of logs: requests, errors, HikariCP pool status. Luckily this piece of the puzzle had already been invented: the logstash-logback-encoder provides encoders, layouts and appenders to log JSON directly to Logstash. So we had to find a way to send our logs directly to Logstash, which would process the logs and send it to Elasticsearch. We often use Logback as logging framework. Each application can use this shared library, which also ensures that all logging is in the same format. So we chose to embed most of the logic in a 'shared library'. While this is surely a valid option, we didn't want to configure Filebeat every single time we created a new application.

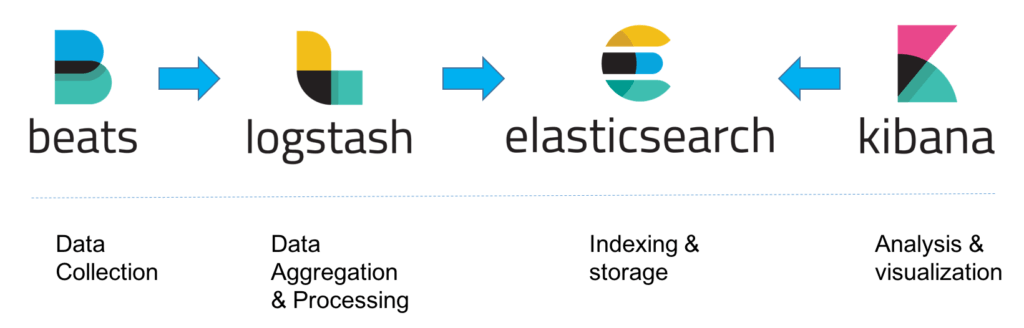

One of the proposed options is to ship logfiles directly with Beats (Filebeat). Logstash would filter and transform this input to a standard format and on would send the output to Elasticsearch in turn so it's ready to be searched and visualized. The typical process to ship logs to Elasticsearch works as follows: Beats (Filebeat in particular) sends output from a logfile to Logstash.

For more information on visualisations and dashboards, click here to skip to the next chapter. Examples will be based on that tech stack. More at /elk-stack.Īll our applications are based on Java and Spring Boot, using Logback as logging framework. Since Beats was added recently, the ELK stack has been renamed to Elastic Stack. While ELK is mainly used to facilitate search solutions, it can also be used to centralize logs from different applications.

Logstash is best understood as a powerful ingest pipeline and Kibana is the visualization layer. Elasticsearch is a distributed, RESTful, JSON-based search engine. ELK stackĮLK is an acronym that stands for Elasticsearch, Logstash and Kibana. Hence the centralized system that gathers logs. In some cases, the instance could already be gone, which would mean that the logfiles are gone too. However, since the instances on which the application is deployed are very volatile, it would be a pain to get the logfiles. So it is really important to know the root cause, which you'll know only for sure if you can monitor the logs. If an application becomes unhealthy it is important to know the exact reason: maybe the database is unreachable or there is a memory leak, maybe a bug even slipped through to production (hypothetically, of course). An application could become unhealthy for any reason and could be deployed on a new instance in the cloud automatically. One of the drawbacks we immediately encountered is the fact that you'll never know for sure where an application is hosted. While this has many advantages, it also has some drawbacks. Each of these microservices is deployed in a cloud environment (AWS). Why would you need a centralized system that gathers logs? We recently switched one of our projects from an architecture with a dozen monoliths to a microservices based approach.
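The index naming scheme described in this post (one index per environment, application, appender and date, all covered by a single `*` pattern) can be illustrated with a small Java sketch. This is not code from our shared library or from Logstash or Kibana: the class name `LogIndexNames` and its methods are hypothetical, and the wildcard matching is a simplified stand-in for how an index pattern selects indices.

```java
import java.time.LocalDate;
import java.time.format.DateTimeFormatter;
import java.util.regex.Pattern;

// Hypothetical helper, for illustration only: it mirrors the naming scheme
// <environment>_logs_<application>_<appender>_<yyyy.MM.dd> from the post.
final class LogIndexNames {

    // Same date layout as in the example index name (2019.06.18).
    private static final DateTimeFormatter DAY = DateTimeFormatter.ofPattern("yyyy.MM.dd");

    private LogIndexNames() {
    }

    // Common prefix shared by every daily index of one application and appender.
    private static String prefix(String environment, String application, String appender) {
        return environment + "_logs_" + application + "_" + appender + "_";
    }

    // Name of the index that receives the logs of a single day.
    static String dailyIndex(String environment, String application, String appender, LocalDate day) {
        return prefix(environment, application, appender) + DAY.format(day);
    }

    // Index pattern that covers the daily indices of all days.
    static String indexPattern(String environment, String application, String appender) {
        return prefix(environment, application, appender) + "*";
    }

    // Simplified wildcard check (an assumption, not Kibana's implementation):
    // '*' matches any run of characters, everything else must match literally.
    static boolean matches(String indexPattern, String indexName) {
        String[] parts = indexPattern.split("\\*", -1);
        for (int i = 0; i < parts.length; i++) {
            parts[i] = Pattern.quote(parts[i]);
        }
        return Pattern.matches(String.join(".*", parts), indexName);
    }

    public static void main(String[] args) {
        String index = dailyIndex("prod", "awemsome-application", "requests", LocalDate.of(2019, 6, 18));
        String pattern = indexPattern("prod", "awemsome-application", "requests");
        System.out.println(index);   // prod_logs_awemsome-application_requests_2019.06.18
        System.out.println(pattern); // prod_logs_awemsome-application_requests_*
        System.out.println(matches(pattern, index)); // true
    }
}
```

Because the date is the last segment of the name, a single trailing `*` is enough to select every day for one application and appender, while indices of other appenders (for example errors) stay out of the result.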
